If you’ve ever sat in a boardroom as a CEO or senior leader, you’ve probably noticed the tension when the conversation turns to AI. There’s excitement, but also skepticism: directors want to know what’s real, what’s hype, and how your AI vision translates into long-term value. Investors, meanwhile, are pressing for clarity on risks, returns, and the practical steps you’re taking. The potential upside is large; PwC estimates that AI could contribute up to $15.7 trillion to the global economy by 2030. By the end of this article, you’ll understand how to move beyond speculative AI narratives and communicate a credible, actionable AI strategy that earns trust from both boards and investors.

Why Does Communicating AI Strategy Matter More Than Ever?

The stakes for AI communication at the board and investor level have never been higher. As organizations race to adopt AI, the gap between technical ambition and boardroom understanding is widening. According to Deloitte, nearly a third (31%) of boards say AI is not on the board agenda, though this is a marked improvement from 45% in the previous survey (Deloitte, 2025). This signals progress, but also highlights that many boards are still catching up.

Here’s the thing: many teams assume that simply mentioning AI in their strategy is enough to satisfy stakeholders. In practice, boards and investors increasingly demand substance: a clear articulation of risks, governance, and measurable outcomes. The pressure is on CEOs to bridge this gap with communication that is both visionary and grounded. Brandon Hall Group research reveals that companies with strong coaching cultures are 130% more likely to achieve strong business results and significantly higher employee engagement.

What Are the Core Challenges in Board-Level AI Communication?

Let’s be honest—AI is a moving target. The technology evolves rapidly, and so do the expectations of boards and investors. Yet, the fundamental challenge remains: translating technical complexity into business relevance.

- AI Fluency Gap: Despite the buzz, 66% of boards still have “limited to no knowledge or experience” with AI, though this is an improvement over 79% in the previous survey (Deloitte, 2025). This means most directors are not equipped to ask the right questions or challenge assumptions, making it easy for hype to overshadow substance.

- Risk Perception: Over half (53%) of investors raised concerns about AI’s impact on the workforce, particularly the risk that the loss of junior talent could lead to “brain drain” (EY, 2026). This shows that investors are looking beyond technical feasibility to the broader organizational and social implications.

- Governance Gaps: Only 29% of organizations have comprehensive AI governance plans in place (Diligent, 2025). Without robust governance, even the most promising AI initiatives can falter, eroding stakeholder trust.

Most CEOs assume that a well-crafted technical roadmap will speak for itself. But the reality is that effective communication requires translating this roadmap into language that resonates with non-technical stakeholders, addresses their concerns, and demonstrates alignment with long-term business goals.

How Can CEOs Translate AI Vision Into Board and Investor Language?

Most executive teams believe that technical fluency is a prerequisite for board-level AI discussions. In practice, what boards and investors value most is clarity, transparency, and a direct link between AI initiatives and business outcomes.

Let’s break down the essentials of effective AI communication at the board level:

1. Frame AI as a Strategic Enabler, Not a Silver Bullet

Boards are increasingly aware that AI isn’t a plug-and-play solution. They want to see how AI fits into the broader business strategy and how it will drive sustainable value. When presenting your AI strategy, focus on:

- Business Alignment: Explain how AI initiatives support core business objectives, such as improving customer experience, optimizing operations, or unlocking new revenue streams.

- Realistic Timelines: Set expectations around the pace of AI adoption and the milestones that will signal progress. Avoid overpromising on short-term results.

- Tangible Outcomes: Use specific examples and case studies to illustrate how AI investments will deliver measurable impact.

2. Address Risks and Governance Head-On

Risk management is top of mind for boards and investors. With 60% of legal, compliance, and audit leaders citing technology as their top risk concern (Diligent, 2025), it’s crucial to demonstrate that you have robust AI governance in place.

- AI Governance: Articulate the frameworks and policies guiding your AI initiatives. Reference industry standards and regulatory requirements to show alignment with best practices. For foundational knowledge, see this resource on AI governance.

- Ethical Considerations: Discuss how you’re addressing issues such as bias, transparency, and data privacy. Boards want to know that you’re not just compliant, but proactive in managing ethical risks.

- Continuous Oversight: Outline the mechanisms for ongoing monitoring, reporting, and adaptation as AI technologies and regulations evolve.

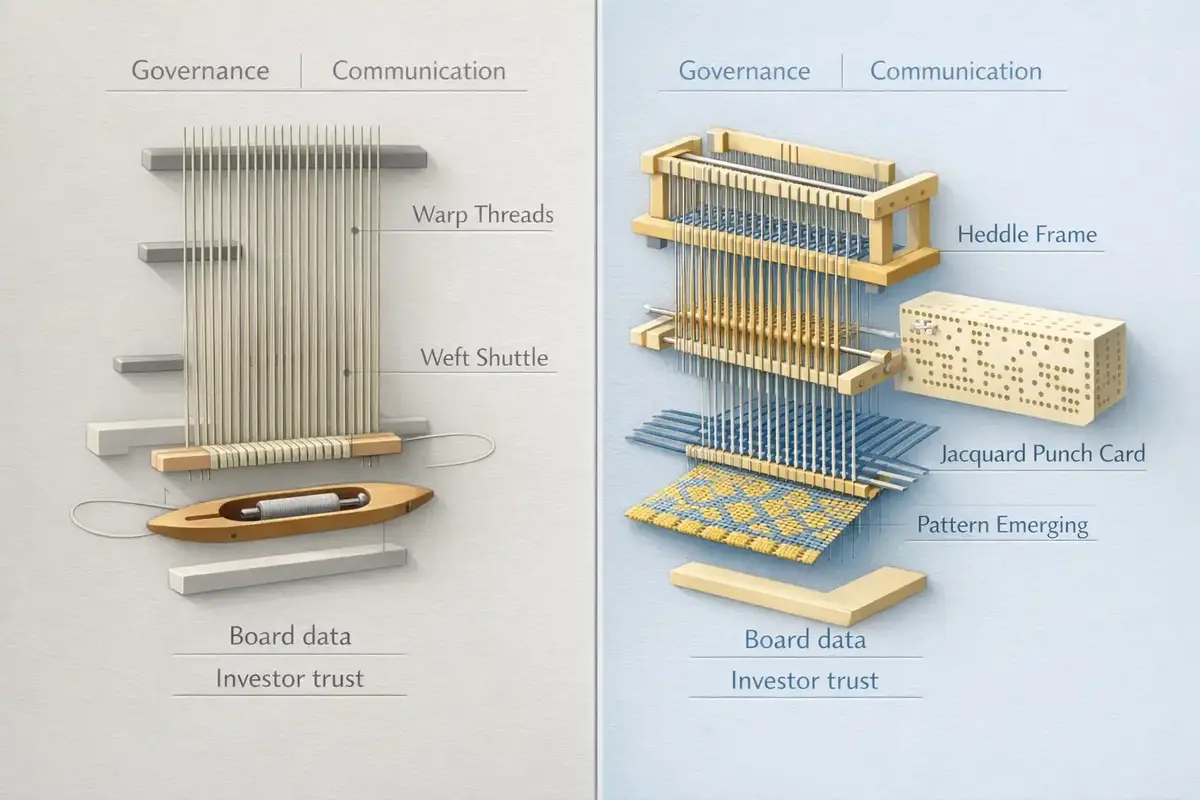

3. Make the Complex Understandable

One of the most common pitfalls is overwhelming stakeholders with technical jargon. Instead, use storytelling, visuals, and analogies to make AI concepts accessible.

- Storytelling: Share real-world anecdotes that illustrate both successes and challenges. This makes the risks and rewards of AI tangible.

- Visual Aids: Use diagrams, infographics, and dashboards to simplify complex data and highlight key trends.

- Glossary: Provide a simple glossary of AI terms to level the playing field for all board members.

4. Build AI Literacy as an Ongoing Boardroom Habit

Here’s a perspective shift: most organizations treat board-level AI education as a one-off event. But with 66% of boards still reporting limited to no knowledge or experience with AI (Deloitte, 2025), ongoing AI literacy programs are essential for informed oversight.

- Regular Briefings: Schedule periodic updates and workshops to keep the board abreast of AI developments.

- Peer Learning: Encourage directors to share insights and lessons learned from other organizations or industries.

- External Experts: Bring in outside advisors or coaches to provide independent perspectives and challenge groupthink.

What Are the Board’s Responsibilities for AI Oversight?

Boards are not just passive recipients of AI updates—they have a fiduciary duty to provide oversight and stewardship. This includes:

- Strategy Approval: Ensuring that AI initiatives align with the company’s long-term vision and risk appetite.

- Risk Management: Challenging management on the adequacy of controls, especially around third-party AI tools and data handling.

- Talent and Culture: Overseeing how AI impacts workforce composition, skills development, and organizational culture.

- Regulatory Compliance: Staying informed about evolving regulations and ensuring the organization is prepared for compliance. For more on aligning board communication with compliance, see AI regulatory compliance.

Interestingly, two out of five (40%) respondents say AI has caused them to think differently about their boards’ makeup (Deloitte, 2025). This suggests that many boards are reassessing their skills and composition to meet the demands of AI oversight.

How Do You Move from Hype to Habits in Boardroom AI Communication?

Most organizations treat AI as a headline topic—something to be discussed once and then left to the technical team. But as AI becomes embedded in business processes, it’s essential to make AI progress and governance a standing item on the board agenda.

Embedding AI into Boardroom Routines

- Standing Agenda Item: Ensure that AI strategy and risk updates are a recurring part of board meetings, not just an annual presentation.

- Progress Tracking: Use dashboards and key performance indicators (KPIs) to track AI project milestones, ROI, and risk metrics over time.

- Scenario Planning: Regularly review “what if” scenarios to anticipate potential AI failures or regulatory changes.

Using Real-World Data to Drive Accountability

Drawing on TII’s two-decade integral methodology, we see that boards that integrate AI oversight into their regular routines are better positioned to manage both risks and opportunities. For example, when boards track AI literacy and the agenda time devoted to AI, they can identify gaps early and invest in targeted education or external support.

How Should CEOs Tailor AI Narratives for Different Investor Personas?

Not all investors are created equal—some are focused on innovation and growth, while others prioritize risk mitigation and regulatory compliance. The most effective CEOs customize their AI communication to resonate with these different personas.

Understanding Investor Concerns

- Workforce Impact: As noted, over half of investors are worried about AI-driven “brain drain” (EY, 2026). Address this head-on by outlining your talent strategy and plans for upskilling or redeployment.

- Long-Term Value: Highlight how AI investments will drive sustainable growth, not just short-term cost savings.

- Transparency: Provide clear disclosures about AI risks, failures, and lessons learned. Investors appreciate candor over glossy narratives.

Customizing Communication

- Risk-Averse Investors: Emphasize governance, compliance, and risk management frameworks. For more on this, see AI risk management.

- Growth-Oriented Investors: Focus on innovation pipelines, market differentiation, and the role of AI in capturing new opportunities.

- Stewardship Models: Align your narrative with investors’ stewardship priorities, such as ESG, workforce development, or ethical AI.

What Are the Most Common Pitfalls in Board-Level AI Communication?

Most teams assume that more data and technical detail will build credibility. But in practice, this can backfire—overwhelming stakeholders and obscuring the strategic message.

Pitfall 1: Overhyping AI Capabilities

Boards and investors can spot inflated promises a mile away. When expectations are set too high, even modest setbacks can erode trust. Instead, focus on incremental wins, lessons learned, and transparent reporting.

Pitfall 2: Neglecting Governance and Risk

With only 29% of organizations having comprehensive AI governance plans (Diligent, 2025), there’s a real risk that AI projects will outpace the controls needed to safeguard the business. Make governance a central part of your narrative, not an afterthought.

Pitfall 3: One-Size-Fits-All Communication

A generic AI update won’t resonate with all stakeholders. Tailor your message to address the unique concerns and priorities of your board and investor base. For more on adapting communication strategies, see customizing AI coaching.

How Can Boards Build and Sustain AI Literacy?

Building AI literacy isn’t a box to tick—it’s a continuous journey. With 66% of boards still lacking experience (Deloitte, 2025), it’s clear that ad hoc education isn’t enough.

- Structured Learning: Implement regular training sessions, workshops, and peer discussions to keep the board informed.

- Resource Libraries: Curate a library of articles, case studies, and glossaries for ongoing reference.

- Feedback Loops: Encourage directors to ask questions and challenge assumptions, creating a culture of curiosity and accountability.

What Practical Tools Can CEOs Use to Communicate AI Strategy?

Effective communication is as much about process as it is about content. Here are some practical tools and templates to support your board and investor conversations:

- AI Strategy One-Pager: Summarize your AI vision, key initiatives, risks, and expected outcomes in a single, visually engaging document.

- Risk and Governance Checklist: Provide a checklist of governance practices, regulatory requirements, and risk controls for board review.

- Progress Dashboards: Use dashboards to visualize AI project milestones, ROI, and risk indicators over time.

- Scenario Playbooks: Develop playbooks for potential AI failures or regulatory changes, outlining response plans and escalation paths.

- Glossary and FAQ: Equip board members with a simple glossary of AI terms and a living FAQ to demystify jargon.

How Can Boards Integrate Regulatory Compliance Into AI Oversight?

As global AI regulations evolve, boards are expected to play a proactive role in ensuring compliance. Yet, 59% of board members were not aware of AI-related regulations (Harvard Law School Forum on Corporate Governance, 2024). This underscores the need for clear, regular updates on regulatory developments and their implications.

- Regulatory Mapping: Provide regular briefings on relevant regulations and standards (such as the EU AI Act, the NIST AI Risk Management Framework, and ISO/IEC 42001) and their impact on your AI initiatives.

- Compliance Integration: Embed compliance requirements into your board communication templates and reporting processes.

- Expert Input: Engage legal and compliance experts to interpret regulatory changes and guide board discussions.

FAQ: Beyond Hype—Communicating AI Strategy to Boards and Investors

How can I explain AI strategy to a non-technical board?

Focus on business outcomes, not technical details. Use clear analogies, real-world examples, and visuals to show how AI supports core business goals. Emphasize risk management and governance to build confidence, and provide a simple glossary to demystify jargon.

What are the top concerns investors have about AI?

Investors are most concerned about workforce impact, risk management, regulatory compliance, and long-term value creation. Over half (53%) specifically worry about AI causing “brain drain” by displacing junior talent (EY, 2026).

How often should AI be on the board agenda?

AI should be a standing agenda item, discussed at least quarterly. Regular updates ensure ongoing oversight, allow for timely risk management, and help the board stay informed about progress and regulatory changes.

What frameworks help boards oversee AI risk?

Boards should use established AI governance frameworks that include risk identification, mitigation controls, compliance mapping, and continuous monitoring. Reference industry standards and regulatory guidelines to ensure best practices.

How do I measure and communicate AI ROI to the board?

Tie AI investments to clear business metrics—such as cost savings, revenue growth, or customer satisfaction. Use dashboards to track progress, and be transparent about both wins and setbacks. Set realistic expectations about timelines and outcomes.

What’s the best way to build AI literacy at the board level?

Implement ongoing education through workshops, expert briefings, and curated resource libraries. Encourage peer learning and create space for questions and discussion. Continuous learning is key to effective oversight.

How do I address ethical and regulatory concerns in board communications?

Be proactive in discussing ethical risks, such as bias and data privacy, and provide regular updates on regulatory developments. Integrate compliance requirements into board reports and seek expert guidance when needed.

Continue Your Leadership Journey

Moving beyond hype in AI strategy communication isn’t about downplaying ambition—it’s about building trust through clarity, transparency, and continuous learning. By translating technical vision into board-level language, addressing risks head-on, and embedding AI governance into boardroom routines, CEOs can earn the confidence of both directors and investors. As the landscape evolves, those who make AI literacy and stewardship part of their organizational DNA will be best positioned to unlock AI’s long-term value.
